
In mathematics and statistics, a probability distribution, more properly called a probability distribution function, assigns to every interval of the real numbers a probability, so that the probability axioms are satisfied. In technical terms, a probability distribution is a probability measure whose domain is the Borel algebra on the reals.

A probability distribution is a special case of the more general notion of a probability measure, which is a function that assigns probabilities satisfying the Kolmogorov axioms to the measurable sets of a measurable space. Additionally, some authors define a distribution generally as the probability measure induced by a random variable X on its range: the probability of a set B is P(X⁻¹(B)). However, this article discusses only probability measures over the real numbers.

Formal definition

Every random variable gives rise to a probability distribution, and this distribution contains most of the important information about the variable. If X is a random variable, the corresponding probability distribution assigns to the interval [a, b] the probability Pr[a ≤ X ≤ b], i.e. the probability that the variable X will take a value in the interval [a, b]. The probability distribution of the variable X can be uniquely described by its cumulative distribution function F(x), which is defined by

$$F(x) = \Pr\left[X \leq x\right]$$

for any x in R.

A distribution is called discrete if its cumulative distribution function consists of a sequence of finite jumps, which means that it belongs to a discrete random variable X: a variable which can only attain values from a certain finite or countable set. By one convention, a distribution is called continuous if its cumulative distribution function is continuous, which means that it belongs to a random variable X for which Pr[ X = x ] = 0 for all x in R. Another convention reserves the term continuous probability distribution for absolutely continuous distributions. These can be expressed by a probability density function: a non-negative Lebesgue integrable function f defined on the real numbers such that

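$$\Pr\left[a \leq X \leq b\right] = \int_{a}^{b} f(x)\,dx$$

for all a and b. Discrete distributions do not admit such a density; there also exist some continuous distributions, such as the one whose cumulative distribution function is the devil's staircase, that do not admit a density.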
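As a rough numerical illustration of how a density yields interval probabilities, the sketch below integrates the exponential density over [a, b] with a midpoint rule and compares the result with the difference of the closed-form cumulative distribution function at the endpoints. The choice of the exponential distribution, the rate, the interval and all function names are assumptions made for this example only.

```python
import math

def exponential_pdf(x, rate=1.0):
    """Density f(x) = rate * exp(-rate * x) for x >= 0, and 0 otherwise."""
    return rate * math.exp(-rate * x) if x >= 0 else 0.0

def exponential_cdf(x, rate=1.0):
    """Closed form F(x) = 1 - exp(-rate * x) for x >= 0, and 0 otherwise."""
    return 1.0 - math.exp(-rate * x) if x >= 0 else 0.0

def integrate_midpoint(f, a, b, steps=10_000):
    """Approximate the integral of f over [a, b] with the midpoint rule."""
    width = (b - a) / steps
    return width * sum(f(a + (i + 0.5) * width) for i in range(steps))

# Hypothetical interval chosen for illustration.
a, b = 0.5, 2.0
by_integration = integrate_midpoint(exponential_pdf, a, b)
by_cdf = exponential_cdf(b) - exponential_cdf(a)
print(by_integration, by_cdf)
```

The two printed numbers should agree to many decimal places, since both compute Pr[a ≤ X ≤ b] for the same variable.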

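The distribution function of a discrete distribution can be expressed as

$$F(x) = \Pr\left[X \leq x\right] = \sum_{x_i \leq x} p(x_i)$$

where the sum runs over the values x_i that X can attain. Here p(x_i) = Pr[X = x_i] is called the probability mass function.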
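A minimal sketch of the discrete case, assuming a fair six-sided die as the example variable: the cumulative distribution function is obtained by summing the probability mass function over all attainable values not exceeding x. The names `discrete_cdf` and `die_pmf` are hypothetical, and exact fractions are used so the jumps of size 1/6 are visible without rounding.

```python
from fractions import Fraction

def discrete_cdf(pmf, x):
    """F(x): sum of p(x_i) over all attainable values x_i <= x."""
    return sum((p for value, p in pmf.items() if value <= x), Fraction(0))

# Hypothetical example: a fair die, p(x_i) = 1/6 for x_i = 1, ..., 6.
die_pmf = {face: Fraction(1, 6) for face in range(1, 7)}

for x in (0, 1, 3.5, 6):
    print(x, discrete_cdf(die_pmf, x))
```

The function is constant between attainable values and jumps at each of them, which is the "sequence of finite jumps" that characterizes a discrete distribution.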

- The support of a distribution is the smallest closed set whose complement has probability zero.

- The probability distribution of the sum of two independent random variables is the convolution of their distributions.

- The probability distribution of the difference of two independent random variables is the cross-correlation of their distributions.
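The sum and difference properties can be sketched for discrete variables, assuming the two variables are independent. The example below uses two fair dice (an assumption for illustration); `sum_pmf` performs a discrete convolution and `difference_pmf` a discrete cross-correlation of the two probability mass functions. Both function names are hypothetical.

```python
from collections import defaultdict
from fractions import Fraction

def sum_pmf(pmf_x, pmf_y):
    """PMF of X + Y for independent X and Y (discrete convolution)."""
    result = defaultdict(Fraction)
    for x, px in pmf_x.items():
        for y, py in pmf_y.items():
            result[x + y] += px * py
    return dict(result)

def difference_pmf(pmf_x, pmf_y):
    """PMF of X - Y for independent X and Y (discrete cross-correlation)."""
    result = defaultdict(Fraction)
    for x, px in pmf_x.items():
        for y, py in pmf_y.items():
            result[x - y] += px * py
    return dict(result)

# Hypothetical example: two fair six-sided dice.
die = {face: Fraction(1, 6) for face in range(1, 7)}
two_dice = sum_pmf(die, die)
gap = difference_pmf(die, die)
print(two_dice[7], gap[0])
```

Multiplying px by py is only valid because independence is assumed; for dependent variables the joint distribution would be needed instead.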

List of important probability distributions

Several probability distributions are so important in theory or applications that they have been given specific names:

Discrete distributions

With finite support

- The Bernoulli distribution, which takes value 1 with probability p and value 0 with probability q = 1 − p.

- The Rademacher distribution, which takes value 1 with probability 1/2 and value −1 with probability 1/2.

- The binomial distribution describes the number of successes in a series of independent Yes/No experiments.

- The degenerate distribution at x0, where X is certain to take the value x0. This does not look random, but it satisfies the definition of random variable. This is useful because it puts deterministic variables and random variables in the same formalism.

- The discrete uniform distribution, where all elements of a finite set are equally likely. This is the idealized distribution of a balanced coin, an unbiased die, a casino roulette wheel or a well-shuffled deck. Also, one can use measurements of quantum states to generate uniform random variables. All these are "physical" or "mechanical" devices, subject to design flaws or perturbations, so the uniform distribution is only an approximation of their behaviour. In digital computers, pseudo-random number generators are used to produce a statistically random discrete uniform distribution.

- The hypergeometric distribution, which describes the number of successes in the first m of a series of n Yes/No experiments, if the total number of successes is known.

- Zipf's law or the Zipf distribution. A discrete power-law distribution, the most famous example of which is the description of the frequency of words in the English language.

- The Zipf-Mandelbrot law is a discrete power law distribution which is a generalization of the Zipf distribution.
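Two of the finite-support distributions listed above have short closed-form probability mass functions, sketched below under stated assumptions. `binomial_pmf` gives the probability of k successes in n independent trials with success probability p; `hypergeometric_pmf` gives the probability of k successes among the first `draws` of `population` experiments when the total number of successes is known. The function and parameter names are hypothetical, and exact fractions are used for clarity.

```python
from fractions import Fraction
from math import comb

def binomial_pmf(k, n, p):
    """Pr[exactly k successes in n independent trials, each with probability p]."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

def hypergeometric_pmf(k, population, successes, draws):
    """Pr[k successes among `draws` experiments out of `population`,
    given that `successes` of the experiments succeed in total]."""
    return Fraction(
        comb(successes, k) * comb(population - successes, draws - k),
        comb(population, draws),
    )

half = Fraction(1, 2)
print(binomial_pmf(2, 4, half))        # four fair coin flips, two heads
print(hypergeometric_pmf(1, 5, 2, 2))  # 5 experiments, 2 successes, first 2 observed
```

With n = 1 the binomial reduces to the Bernoulli distribution, which is one way to see the binomial as a sum of independent Bernoulli variables.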

With infinite support

- The Boltzmann distribution, a discrete distribution important in statistical physics which describes the probabilities of the various discrete energy levels of a system in thermal equilibrium. It has a continuous analogue. Special cases include:

  - The Gibbs distribution

  - The Maxwell-Boltzmann distribution

  - The Bose-Einstein distribution

  - The Fermi-Dirac distribution

- The geometric distribution, a discrete distribution which describes the number of attempts needed to get the first success in a series of independent Yes/No experiments.

- The logarithmic (series) distribution

- The negative binomial distribution, a generalization of the geometric distribution to the nth success.

- The parabolic fractal distribution

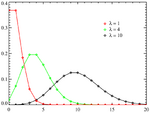

- The Poisson distribution, which describes the number of events that happen in a certain time interval when there is a very large number of individually unlikely events.


- The Skellam distribution, the distribution of the difference between two independent Poisson-distributed random variables.

- The Yule-Simon distribution

- The zeta distribution has uses in applied statistics and statistical mechanics, and may be of interest to number theorists. It is the Zipf distribution for an infinite number of elements.
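The description of the Poisson distribution as arising from many individually unlikely events can be checked numerically. The sketch below compares a binomial distribution with a large number of trials and a small success probability against a Poisson distribution with the same mean. The values n = 1000 and p = 0.003 and the function names are assumptions chosen for illustration.

```python
from math import comb, exp, factorial

def binomial_pmf(k, n, p):
    """Pr[exactly k successes in n independent trials, each with probability p]."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

def poisson_pmf(k, lam):
    """Pr[exactly k events] for a Poisson distribution with mean lam."""
    return exp(-lam) * lam**k / factorial(k)

# Hypothetical setting: many trials, each individually unlikely to succeed.
n, p = 1000, 0.003
lam = n * p

largest_gap = max(
    abs(binomial_pmf(k, n, p) - poisson_pmf(k, lam)) for k in range(21)
)
print(largest_gap)
```

The largest gap between the two probability mass functions is small here, and it shrinks further as n grows with n·p held fixed.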

Continuous distributions

Supported on a bounded interval

- The Beta distribution on [0,1], of which the uniform distribution is a special case, and which is useful in estimating success probabilities.

- The continuous uniform distribution on [a,b], where all points in a finite interval are equally likely.

- The rectangular distribution is a uniform distribution on [-1/2,1/2].

- The Dirac delta function, although not strictly a function, is a limiting form of many continuous probability functions. It represents a discrete probability distribution concentrated at 0 (a degenerate distribution), but the notation treats it as if it were a continuous distribution.

- The Kumaraswamy distribution is as versatile as the Beta distribution but has simple closed forms for both the cdf and the pdf.

- The logarithmic distribution (continuous)

- The triangular distribution on [a, b], a special case of which is the distribution of the sum of two uniformly distributed random variables (the convolution of two uniform distributions).

- The von Mises distribution on the circle.

- The von Mises-Fisher distribution on the N-dimensional sphere has the von Mises distribution as a special case.

- The Kent distribution on the unit sphere in three-dimensional space.

- The Wigner semicircle distribution is important in the theory of random matrices.
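The remark that a triangular distribution arises as the sum of two uniformly distributed variables can be sketched numerically, assuming the two variables are independent and uniform on [0, 1]. The code approximates the convolution integral of the two densities with a midpoint rule and compares it with the triangular density on [0, 2]. The function names and the step count are assumptions for this example.

```python
def uniform_pdf(x, a=0.0, b=1.0):
    """Density of the continuous uniform distribution on [a, b]."""
    return 1.0 / (b - a) if a <= x <= b else 0.0

def sum_density(z, steps=10_000):
    """Density of X + Y at z for independent X, Y uniform on [0, 1],
    approximated as the integral over x in [0, 1] of f(x) * f(z - x)."""
    width = 1.0 / steps
    return width * sum(
        uniform_pdf((i + 0.5) * width) * uniform_pdf(z - (i + 0.5) * width)
        for i in range(steps)
    )

def triangular_pdf(z):
    """Symmetric triangular density on [0, 2] with its peak at 1."""
    if 0.0 <= z <= 1.0:
        return z
    if 1.0 < z <= 2.0:
        return 2.0 - z
    return 0.0

for z in (0.25, 1.0, 1.5):
    print(z, sum_density(z), triangular_pdf(z))
```

The numerical convolution matches the triangular density up to the discretization error of the midpoint rule.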

Supported on semi-infinite intervals, usually [0,∞)

- The chi distribution

- The noncentral chi distribution

- The chi-square distribution, which is the distribution of the sum of the squares of n independent standard Gaussian random variables. It is a special case of the Gamma distribution, and it is used in goodness-of-fit tests in statistics.

- The inverse-chi-square distribution

- The noncentral chi-square distribution

- The scale-inverse-chi-square distribution

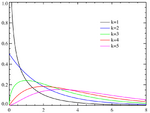

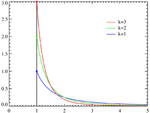

- The exponential distribution, which describes the time between consecutive rare random events in a process with no memory.

- The F-distribution, which is the distribution of the ratio of two (normalized) chi-square distributed random variables, used in the analysis of variance. (Called the beta prime distribution when it is the ratio of two chi-square variates which are not normalized by dividing them by their numbers of degrees of freedom.)

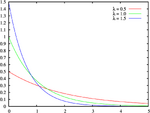

- The Gamma distribution, which describes the time until n consecutive rare random events occur in a process with no memory.

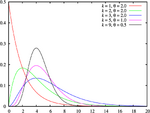

- The Erlang distribution, which is a special case of the gamma distribution with integral shape parameter, developed to predict waiting times in queuing systems.

- The inverse-gamma distribution

- The half-normal distribution

- The Lévy distribution

- The log-logistic distribution

- The log-normal distribution, describing variables which can be modelled as the product of many small independent positive variables.

- The Pareto distribution, or "power law" distribution, used in the analysis of financial data and critical behavior.

- The Pearson Type III distribution (see Pearson distributions)

- The Rayleigh distribution

- The Rayleigh mixture distribution

- The Rice distribution

- The type-2 Gumbel distribution

- The Wald distribution

- The Weibull distribution, of which the exponential distribution is a special case, is used to model the lifetime of technical devices.
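Two statements from the list above lend themselves to a quick check: the exponential distribution belongs to a process with no memory, and it is a special case of the Weibull distribution. The sketch below works with survival functions Pr[X > t]; the rate, the times s and t, and the function names are assumptions for illustration. The Weibull parametrization used here (shape and scale) is one common convention.

```python
import math

def exponential_survival(t, rate):
    """Pr[X > t] for an exponential waiting time with the given rate."""
    return math.exp(-rate * t)

def weibull_survival(t, shape, scale):
    """Pr[X > t] for a Weibull lifetime with the given shape and scale."""
    return math.exp(-((t / scale) ** shape))

# Hypothetical values chosen for illustration.
rate, s, t = 0.5, 2.0, 3.0

# No memory: having already waited s does not change the remaining wait.
conditional = exponential_survival(s + t, rate) / exponential_survival(s, rate)
unconditional = exponential_survival(t, rate)

# Weibull with shape 1 and scale 1/rate coincides with the exponential.
weibull_as_exponential = weibull_survival(t, shape=1.0, scale=1.0 / rate)

print(conditional, unconditional, weibull_as_exponential)
```

All three printed values agree up to floating-point rounding.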

Supported on the whole real line


- The Cauchy distribution, an example of a distribution which does not have an expected value or a variance. In physics it is usually called a Lorentzian profile, and is associated with many processes, including resonance energy distribution, impact and natural spectral line broadening and quadratic Stark line broadening.

- The Fisher-Tippett, extreme value, or log-Weibull distribution

- The Gumbel distribution, a special case of the Fisher-Tippett distribution

- Fisher's z-distribution

- The generalized extreme value distribution

- The hyperbolic distribution

- The hyperbolic secant distribution

- The Landau distribution

- The Laplace distribution

- The Lévy skew alpha-stable distribution is often used to characterize financial data and critical behavior.

- The map-Airy distribution

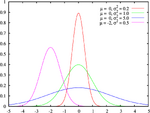

- The normal distribution, also called the Gaussian or the bell curve. It is ubiquitous in nature and statistics due to the central limit theorem: a variable that can be modelled as a sum of many small independent variables with finite variance is approximately normal.

- The Pearson Type IV distribution (see Pearson distributions)

- Student's t-distribution, useful for estimating unknown means of Gaussian populations.

- The noncentral t-distribution

- The type-1 Gumbel distribution

- The Voigt distribution, or Voigt profile, is the convolution of a normal distribution and a Cauchy distribution. It is found in spectroscopy when spectral line profiles are broadened by a mixture of Lorentzian and Doppler broadening mechanisms.
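The central limit theorem mentioned for the normal distribution can be illustrated with a sum of independent Yes/No experiments. In the sketch below, the exact cumulative probability of a binomial variable (a sum of 100 fair coin flips, an assumption chosen for this example) is compared with the normal approximation that has the same mean and standard deviation. A continuity correction of 0.5 is applied, and the function names are hypothetical.

```python
from math import comb, erf, sqrt

def normal_cdf(x, mean=0.0, sd=1.0):
    """Cumulative distribution function of the normal distribution."""
    return 0.5 * (1.0 + erf((x - mean) / (sd * sqrt(2.0))))

def binomial_cdf(k, n, p):
    """Pr[X <= k] for the number of successes in n independent trials."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))

# Hypothetical setting: 100 fair coin flips.
n, p = 100, 0.5
mean = n * p
sd = sqrt(n * p * (1 - p))

exact = binomial_cdf(55, n, p)
approx = normal_cdf(55 + 0.5, mean, sd)  # continuity correction
print(exact, approx)
```

The two values are close, which is what the theorem predicts for a sum of many small independent contributions.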

Joint distributions

For any set of independent random variables the probability density function of the joint distribution is the product of the individual ones.
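A minimal sketch of the product rule for independent variables, assuming two independent standard normal variables as the example: the joint density built as a product of the individual densities is compared with the bivariate normal density that has zero means, unit variances and zero correlation. The function names are hypothetical.

```python
import math

def standard_normal_pdf(x):
    """Density of the standard normal distribution."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def joint_pdf_independent(x, y):
    """Joint density of two independent standard normals: product of marginals."""
    return standard_normal_pdf(x) * standard_normal_pdf(y)

def bivariate_standard_normal_pdf(x, y):
    """Bivariate normal density with zero means, unit variances, zero correlation."""
    return math.exp(-0.5 * (x * x + y * y)) / (2.0 * math.pi)

for point in ((0.0, 0.0), (1.0, -0.5), (2.0, 1.5)):
    print(point, joint_pdf_independent(*point), bivariate_standard_normal_pdf(*point))
```

The agreement depends on independence; with non-zero correlation the joint density no longer factorizes into the marginals.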

Two or more random variables on the same sample space

- Dirichlet distribution, a generalization of the beta distribution.

- Ewens's sampling formula is a probability distribution on the set of all partitions of an integer n, arising in population genetics.

- Balding-Nichols Model

- multinomial distribution, a generalization of the binomial distribution.

- multivariate normal distribution, a generalization of the normal distribution.

Matrix-valued distributions

- Wishart distribution

- matrix normal distribution

- matrix t-distribution

- Hotelling's T-square distribution

Miscellaneous distributions

- The Cantor distribution

- Truncated distribution

See also

- copula (statistics)

- cumulative distribution function

- likelihood function

- list of statistical topics

- probability density function

- random variable

- histogram



This page uses Creative Commons licensed content from Wikipedia.
